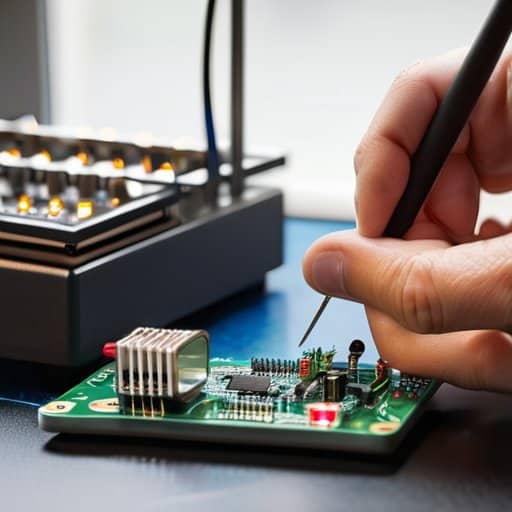

If you’ve been told that the Edge-to‑Cloud Continuum is just another glossy buzzword demanding a data‑center the size of a small planet, you’re not alone. I spent a weekend soldering a handful of cheap ESP32 modules to a repurposed router, turning my garage into a micro‑data hub that actually talked to the cloud without a single corporate‑grade server in sight. When the first packet pinged back, I heard the faint whine of the router’s fan and smelled the ozone from my soldering iron—a reminder that real‑world edge computing can be as simple as a hobbyist’s bench, not a corporate monopoly.

In the next few minutes I’ll strip away the jargon and walk you through setting up your own Edge‑to‑Cloud Continuum on a modest budget. We’ll pick the right microcontroller, configure a lightweight function on a free cloud tier, and troubleshoot the latency quirks that keep hobbyists awake at 2 a.m. I’ll share the three pitfalls I hit (over‑engineering, forgetting security, and ignoring power constraints) so you can avoid them from day one. By the end, you’ll have a working pipeline that proves the “edge” can be as close as your workbench, and the “cloud” as cheap as a free tier.

Table of Contents

- Exploring the Edge‑to‑Cloud Continuum: My Soldering Roots

- Distributed Cloud Architecture Benefits for Curious Makers

- How Edge Computing Latency Reduction Fuels Real‑Time Data Processing

- Cloud Orchestration Across Hybrid Infrastructure: My Edge‑to‑Cloud Adventure

- Battling Edge‑to‑Cloud Security Challenges With a Tinkerer’s Insight

- Unlocking Seamless Integration of Edge and Cloud Services

- 5 Hands‑On Hacks for Mastering the Edge‑to‑Cloud Flow

- Bottom Line: Edge‑to‑Cloud Essentials

- Bridging the Edge and the Cloud

- Wrapping It All Up

- Frequently Asked Questions

Exploring the Edge‑to‑Cloud Continuum: My Soldering Roots

I still remember the first time I clipped a tiny PCB to a salvaged Wi‑Fi module and watched the LED flicker in sync with a sensor that lived on my workbench. That moment was more than a hobby; it was a tiny lesson in real‑time data processing at the edge. By moving the crunching closer to the source, I saw latency drop from seconds to milliseconds—exactly what engineers call edge computing latency reduction. Those early solder‑joints taught me that shaving off a few milliseconds can be the difference between a responsive DIY thermostat and a sluggish one.

Fast‑forward to today, that same bench‑side curiosity fuels my fascination with cloud orchestration across hybrid infrastructure. When a fleet of Raspberry Pi nodes streams video to a central Kubernetes cluster, the distributed cloud architecture benefits become crystal clear: you get scalability without losing the immediacy of local processing. Yet, weaving those two worlds together isn’t just a technical puzzle; it’s a security tightrope. Managing edge‑to‑cloud security challenges while keeping the user experience seamless is the real art—one I love to explore by building mock‑up pipelines that mimic enterprise setups, all from my garage.

Distributed Cloud Architecture Benefits for Curious Makers

One of the things I noticed when I moved my data logger into the cloud was how effortlessly the platform stretched to match my appetite. Instead of buying a server that sits idle most of the day, I could spin up a tiny instance for a weekend hackathon and let it shrink back down when the experiment ended. That elastic resource allocation keeps my budget friendly and ideas limitless.

Beyond flexibility, distributed cloud lets me spread a workflow across data centers a world away from my garage. When I prototype a low‑latency sensor hub, the nearest edge node handles the heavy lifting while the central cloud stores history for later analysis. The result is seamless multi‑region collaboration: my teammate in Berlin can tweak the same firmware in real time, and I see the logs update on my Raspberry Pi dashboard without leaving my bench.

How Edge Computing Latency Reduction Fuels Real‑Time Data Processing

One of the tricks that saved me countless hours was diving into the open‑source EdgeX Foundry tutorials, where the community not only walks you through setting up secure MQTT bridges but also shares ready‑made Docker Compose files that let a tiny edge node talk to a cloud‑hosted Kubernetes cluster in under a second. I’ve bookmarked the “Getting Started” page as my go‑to cheat sheet.

Whenever I solder a new sensor onto a PCB, the first thing I check is how quickly the data can jump from the board to the cloud and back again. By moving the compute engine a few meters closer, right onto the same router or a tiny edge server, the round‑trip time shrinks dramatically. In practice, a device that used to wait on a full round trip to a distant data center can get its answer from the local network in a few milliseconds.

That speed isn’t just a bragging right; it unlocks real‑time data pipelines that power everything from factory robots to home‑brew drone swarms. When a temperature sensor reports a spike, the edge node can filter, aggregate, and trigger an alarm before the cloud even sees the packet. The result is instant decision making that keeps machines safe, users happy, and my hobby projects humming like a well‑tuned mechanical‑keyboard firmware.
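Here is a minimal sketch of that filter, aggregate, and alarm loop as it might run on an edge node. The class name `EdgeFilter`, the 60 °C threshold, and the five-reading window are all my own placeholder assumptions, not values from a real deployment; tune them for your sensor.

```python
from collections import deque
from statistics import mean

ALARM_THRESHOLD_C = 60.0   # hypothetical alarm threshold, degrees Celsius
WINDOW_SIZE = 5            # hypothetical number of readings per summary


class EdgeFilter:
    """Smooths readings locally and decides what is worth sending upstream."""

    def __init__(self, threshold=ALARM_THRESHOLD_C, window=WINDOW_SIZE):
        self.threshold = threshold
        self.window = window
        self.readings = deque(maxlen=window)

    def ingest(self, temperature_c):
        """Return the list of (kind, value) events produced by one reading."""
        events = []
        # Alarm path: decided on the edge node, no cloud round trip needed.
        if temperature_c >= self.threshold:
            events.append(("alarm", temperature_c))
        # Aggregate path: forward a single average once the window is full.
        self.readings.append(temperature_c)
        if len(self.readings) == self.window:
            events.append(("summary", round(mean(self.readings), 2)))
            self.readings.clear()
        return events


edge = EdgeFilter()
for value in [21.0, 21.5, 75.0, 22.0, 21.5]:
    for event in edge.ingest(value):
        print(event)
```

The alarm fires the moment the spike arrives, while the cloud only ever receives one summary per window, which is where the bandwidth saving comes from.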

Cloud Orchestration Across Hybrid Infrastructure: My Edge‑to‑Cloud Adventure

I’ve been tinkering with a tiny Raspberry‑Pi cluster on my workbench, and the moment I hooked it up to a public‑cloud API, the magic of cloud orchestration across hybrid infrastructure revealed itself. Suddenly, my local sensor data—already benefiting from edge computing latency reduction—could be scheduled, scaled, and balanced alongside a fleet of VMs in a distant region. The result? A smooth, seamless integration of edge and cloud services that feels like watching two puzzle pieces click together, letting my hobby projects talk to the world in real time.

But no adventure is complete without a few bumps. As I started pulling logs from both ends, the edge‑to‑cloud security challenges stared me right in the face: differing authentication models, variable encryption requirements, and the ever‑present risk of a rogue packet slipping through. Thankfully, the distributed cloud architecture benefits—automatic failover and policy‑driven routing—gave me a safety net, turning what could have been a nightmare into a learning lab for real‑time data processing at the edge. In the end, the hybrid dance of resources proved both thrilling and reassuring. I’ll post the step‑by‑step guide this weekend for fellow makers to explore.

Battling Edge‑to‑Cloud Security Challenges With a Tinkerer’s Insight

Ever since I soldered my first temperature sensor onto a breadboard, I’ve learned that the moment a device steps out of my workshop, it steps into a world of invisible attackers. On the edge, every stray UART line or exposed RJ45 port becomes a potential doorway, so I start every build with a zero‑trust mindset at the edge: treating every packet as untrusted, encrypting telemetry, and hard‑wiring mutual TLS before the data ever sees the cloud.
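For a gateway that runs Python (a Raspberry Pi, say), the mutual TLS piece might look roughly like the sketch below. It uses only the standard `ssl` module; the function name `build_mtls_client_context` and the idea of passing file paths as arguments are my own assumptions, and you would supply your own CA, certificate, and key files.

```python
import ssl


def build_mtls_client_context(ca_file=None, cert_file=None, key_file=None):
    """Build a TLS client context that verifies the server and, when a
    certificate is supplied, presents it so the server can verify us too."""
    # SERVER_AUTH turns on certificate verification and hostname checking.
    # With ca_file=None the system's default trust store is used.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    # Refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    # The "mutual" half: load the device's own certificate and private key.
    if cert_file:
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context


context = build_mtls_client_context()
print(context.verify_mode, context.check_hostname)
```

To use it, wrap a connected socket with `context.wrap_socket(sock, server_hostname=...)`. The server has to be configured to require client certificates as well, otherwise you only get ordinary one-way TLS.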

But a locked‑down tunnel isn’t enough; the real battle begins when firmware needs a fresh fix. I habitually flash a tiny Raspberry‑Pi as a gateway, then sign every binary with my own PGP key and verify the signature on boot—what I call my secure boot chain ritual. With OTA updates signed and replay‑protected, the edge can breathe new features without opening a back‑door for ransomware.
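To show the shape of a signed, replay‑protected update check without pulling in a PGP library, here is a sketch that swaps in HMAC‑SHA256 from Python’s standard library. That is a deliberate simplification: HMAC uses a shared secret, whereas a real setup like mine would use an asymmetric signature so the device only holds a public key. The key, function names, and 4‑byte version field are all hypothetical.

```python
import hashlib
import hmac

SIGNING_KEY = b"demo-key-do-not-ship"  # hypothetical shared secret, demo only


def sign_update(image: bytes, version: int, key: bytes = SIGNING_KEY) -> bytes:
    """Compute a tag that covers the version number as well as the image."""
    message = version.to_bytes(4, "big") + image
    return hmac.new(key, message, hashlib.sha256).digest()


def accept_update(image, version, tag, installed_version, key=SIGNING_KEY):
    """Accept only authentic images that are newer than what is installed."""
    expected = sign_update(image, version, key)
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(expected, tag):
        return False  # tampered image, wrong key, or altered version number
    # A validly signed but older (or identical) release is a replay: reject it.
    return version > installed_version


image = b"firmware-v2"
tag = sign_update(image, 2)
print(accept_update(image, 2, tag, installed_version=1))
print(accept_update(image, 2, tag, installed_version=2))
```

Binding the version number into the signed message is what stops an attacker from relabelling an old, vulnerable image as a new one.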

Unlocking Seamless Integration of Edge and Cloud Services

When I first wired a tiny Arduino to a remote VM, I realized the magic happens at the point where the sensor talks to the server without a hitch. The secret sauce is a well‑designed edge‑to‑cloud bridge that routes data, authentication, and firmware updates in a single, low‑latency pipeline. By exposing a simple REST endpoint on the edge device and letting the cloud’s API gateway handle versioning, I can push new code to a field‑deployed unit with just a few clicks.

On the cloud side, I lean on a lightweight orchestration layer that registers each edge node, watches health metrics, and spins up serverless functions only when the device signals a need. This layer lets me keep the edge firmware lean while the heavy lifting (data analytics, model training, and long‑term storage) stays safely in the cloud. Altogether, it feels like a perfectly tuned keyboard.
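The register, watch, and run‑on‑demand idea can be sketched in a few lines. This is a toy in‑memory model, not any particular platform’s API: `NodeRegistry`, the 30‑second heartbeat timeout, and the `handler` callable standing in for a serverless function are all assumptions for illustration.

```python
import time


class NodeRegistry:
    """Tracks edge nodes by heartbeat and runs work only for healthy ones."""

    def __init__(self, timeout_s=30.0, clock=time.monotonic):
        self.timeout_s = timeout_s   # hypothetical heartbeat timeout
        self.clock = clock           # injectable so the logic is testable
        self.last_seen = {}

    def heartbeat(self, node_id):
        """Register a node, or refresh it if it is already known."""
        self.last_seen[node_id] = self.clock()

    def healthy_nodes(self):
        """Return the nodes whose last heartbeat is within the timeout."""
        now = self.clock()
        return sorted(
            node for node, seen in self.last_seen.items()
            if now - seen <= self.timeout_s
        )

    def handle_signal(self, node_id, payload, handler):
        """Run `handler` only when a registered, healthy node asks for work."""
        if node_id not in self.healthy_nodes():
            return None
        return handler(payload)


registry = NodeRegistry()
registry.heartbeat("bench-pi")
print(registry.handle_signal("bench-pi", [20.5, 21.0], handler=max))
print(registry.handle_signal("unknown-node", [99.0], handler=max))
```

Nothing runs until a node actually signals, which is the property that keeps a free tier free.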

5 Hands‑On Hacks for Mastering the Edge‑to‑Cloud Flow

- Start with a “micro‑gateway” on your bench: a Raspberry Pi or an old router flashed with a lightweight OS that mimics the edge node you’ll eventually deploy.

- Keep latency metrics front‑and‑center; a simple ping‑to‑the‑cloud script will reveal whether your data path is truly “real‑time” or just “really‑slow.”

- Use container‑native tooling (Docker, Podman) on both edge and cloud so your code runs largely unchanged across the spectrum, provided you build images for each CPU architecture you target. Think of it as a universal “plug‑and‑play” for any hardware you tinker with.

- Secure the pipeline early by terminating TLS at the edge; even a modest ESP32 can generate keys and keep your sensor data from being intercepted.

- Document every firmware and network tweak in a version‑controlled repo; your future self (and any curious maker who reads your blog) will thank you when the edge‑cloud dance gets more complex.
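The “ping‑to‑the‑cloud” script from the second hack could look something like this. It is a rough sketch: it times a TCP connect rather than a true ICMP ping (which usually needs elevated privileges), and the function names and default port are my own choices. The demo at the bottom times a local sleep so it runs without a network.

```python
import socket
import statistics
import time


def tcp_probe(host, port=443, timeout_s=3.0):
    """One probe: open and close a TCP connection to the endpoint."""
    with socket.create_connection((host, port), timeout=timeout_s):
        pass


def measure_latency_ms(probe, samples=10):
    """Time `probe` repeatedly and summarise the results in milliseconds."""
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        probe()
        timings.append((time.perf_counter() - start) * 1000.0)
    return {
        "min": min(timings),
        "median": statistics.median(timings),
        "max": max(timings),
    }


# Offline demo: a 10 ms sleep stands in for the network round trip.
print(measure_latency_ms(lambda: time.sleep(0.01), samples=3))
```

To measure a real path, pass `lambda: tcp_probe("your-endpoint-hostname")` as the probe. Keep in mind the connect time includes DNS resolution when you use a hostname, so the first sample is often the slowest; the median is the number to watch.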

Bottom Line: Edge‑to‑Cloud Essentials

Edge computing slashes latency, turning real‑time data into instant insights for makers and enterprises alike.

Distributed cloud designs let you scale services wherever you need them, from a garage workshop to a global data center.

Security isn’t an afterthought—layered defenses and smart orchestration keep the edge‑to‑cloud pipeline safe and reliable.

Bridging the Edge and the Cloud

“When a sensor on my workshop bench whispers data to a distant cloud, the latency drops like a solder joint cooling—suddenly the whole world feels as close as my next keystroke.”

Robert Cardenas

Wrapping It All Up

Looking back from my soldering station to the sprawling data centers that now whisper to my Raspberry Pi, the Edge‑to‑Cloud continuum has revealed itself as a practical playground rather than an abstract buzzword. We saw how shaving milliseconds off latency lets an edge node react to a sensor spike instantly, how a distributed cloud fabric can spin up resources on demand, and how a layered security strategy, rooted in the same meticulous grounding I apply when I’m testing a new switch, keeps the whole chain trustworthy. Most importantly, the seamless hand‑off between edge node and cloud service demonstrates that real‑time innovation is no longer a distant dream but a tool we can solder into our own projects today.

That’s why I’m convinced the next wave of makers will build not just gadgets, but ecosystems that live at both ends of the network. Imagine a home‑grown AI model that learns from a garden sensor at the edge, then streams its insights to a cloud dashboard you’ve designed on a weekend. By embracing the continuum, we give our inventions the bandwidth and agility to evolve as fast as our curiosity. So, grab a spare board, fire up a container, and let your next prototype speak to the cloud—because when edge and cloud dance together, the only limit is the size of the idea you’re willing to solder onto the board.

Frequently Asked Questions

How can I decide which workloads belong at the edge versus the cloud in my own DIY projects?

First, list what each task needs: latency, bandwidth, data size, and update frequency. If you need sub‑second response—like reading a sensor for a DIY robot arm or lighting a custom keyboard backlight—keep that workload on the edge. If the job can tolerate a few seconds, involves heavy analytics, or stores lots of logs, push it to the cloud where you have more compute and cheap storage. Sketch a quick decision matrix, prototype, and let the numbers guide you.
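If a spreadsheet feels like overkill, the decision matrix can be a few lines of code. This sketch encodes the rules of thumb above; the one‑second latency cutoff mirrors the “sub‑second” rule, while the 100 MB per day storage cutoff and the `Workload` fields are placeholders I made up, so adjust them to your own numbers.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    max_latency_s: float      # slowest response you can tolerate
    heavy_analytics: bool     # needs more compute than the device has
    data_per_day_mb: float    # volume you want to keep long-term


def place(workload, latency_cutoff_s=1.0, storage_cutoff_mb=100.0):
    """Suggest where a workload belongs: 'edge', 'cloud', or 'either'."""
    # Latency wins first: anything that needs sub-second response stays local.
    if workload.max_latency_s < latency_cutoff_s:
        return "edge"
    # Heavy compute or bulky logs go where compute and storage are cheap.
    if workload.heavy_analytics or workload.data_per_day_mb > storage_cutoff_mb:
        return "cloud"
    return "either"


for job in [
    Workload("robot arm sensor", 0.05, False, 1.0),
    Workload("log archive", 60.0, False, 500.0),
    Workload("daily report", 5.0, False, 1.0),
]:
    print(job.name, "->", place(job))
```

An “either” result is your cue to prototype both ways and let measured latency and cost break the tie.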

What practical security measures should I implement when linking my edge devices to cloud services?

First, I always start with device‑level hardening: change default creds, lock down firmware, and enable secure boot. Next, set up mutual TLS so each edge node presents a certificate when it talks to the cloud, and enforce strict API keys or OAuth scopes. Use a VPN or Zero‑Trust network segment to tunnel traffic, and keep a rolling log of firmware updates. Finally, monitor anomalies with an agent and rotate keys regularly—think of it as giving your solder‑bench a lock.

Which tools or platforms make it easiest to orchestrate seamless data flow between edge nodes and hybrid cloud environments?

If you’re looking to stitch together edge and hybrid‑cloud pipelines, I start with a container‑based stack: KubeEdge (which extends Kubernetes to edge nodes) or Azure IoT Edge lets you run containers on the device while the control plane lives in the cloud. Pair that with a messaging layer like Apache Kafka or an MQTT broker such as EMQX, and you’ve got a data stream flowing between the two. For provisioning, Terraform or Pulumi keeps your infra declarative, and tools like Argo Workflows or Apache NiFi give you pipelines that span edge to cloud.